Picture the inside of the campus computer center, circa 1970. No smartphones, no Internet, just a bunch of workstations with teletype machines hooked into mainframes, clickety-clacking away as the machines’ typeballs smack their fonts onto rolls of paper in staccato bursts.

Amid the computer nerds there sits an 11-year-old boy with black curly hair, hunched over a keyboard. On weekend mornings, after his family had driven up from central Connecticut to his dad’s alma mater for a football game, little Nick Carr would play a different version of the game, punting and passing his way through a rudimentary computer simulation in which he punched 1 to call a “simple run” or 4 for a “long pass.” He’d hit return and then wait for the algorithm to spit back how many yards had been gained or lost. It wasn’t just for fun. “I also actually figured out the early version of word processing they had on the system,” Carr recalls.

Fast-forward to Carr’s matriculation in the fall of 1977. The whiz kid gravitates not to computer science but to English, a choice leavened by an affinity for the Sex Pistols and the Dead Boys: Carr hosted a midnight punk show on WFRD. “That was the only time they’d let me do it,” he recalls. “Some people were a little nervous about it.”

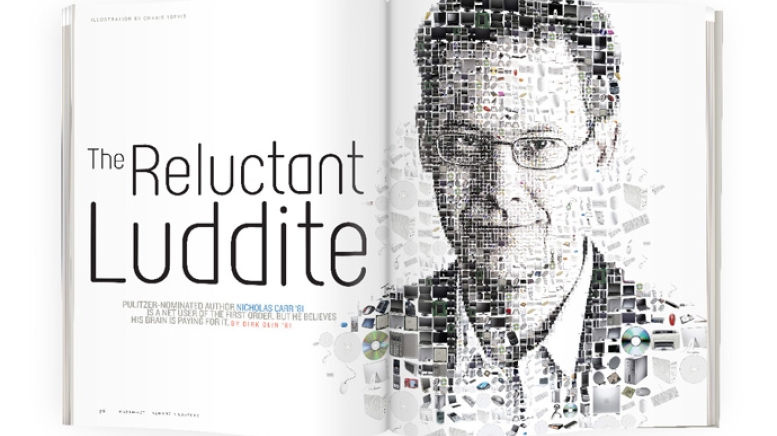

Carr’s biography makes sense in hindsight. Game player, English major, punk: he is now a journalist and a leading iconoclast of the information age. He has written three books, the most recent of which, The Shallows: What the Internet Is Doing to Our Brains, argues that online overuse is infecting society with a collective attention deficit disorder. The book made Carr a Pulitzer Prize finalist earlier this year.

That thesis “came out of a personal realization,” Carr says. “I came to the conclusion that my brain had been reprogrammed due to my use of the Internet. I just didn’t have the concentration I used to have. And not just with books. I was getting antsy even reading a longish magazine piece.”

But isn’t that what happens as one approaches middle age?

“That was my initial thought,” says Carr. “But a lot of studies show that attention spans for things like reading can increase as you get older. No, I think the Net has retrained me so that I expect to consume information rapidly, jumping around and clicking on links and checking e-mail, even when I’m in the middle of reading something else.”

The point of departure for his current work was a question, “Is Google Making Us Stupid?”, that headlined his 2008 article in The Atlantic and grew into his Pulitzer-finalist book. The Shallows offers calm, collected provocation. Slate called the book “Silent Spring for the literary mind.”

“I think of myself as a skeptic,” says Carr. “I’m mostly interested in prompting some reflection. The Internet is woven so deeply into society. How does it influence our sense of self?

“There’s lots about the age of digital information to be celebrated and applauded,” he concedes. “I’m just saying the Net’s dominance comes with a price. Reading is not instinctual. That’s even truer for deep reading, where you make complex connections and experience original insight. It has to be learned. And I think we’re now unlearning some of it.”

The Shallows draws on scholarly research to unpack the relevant cognitive science. “There’s not a single experiment that explains all this,” Carr says. “There aren’t a lot of brain scan studies, because it’s hard to replicate computer use, much less reading, when you’re in an MRI machine. But since the 1980s educational psychologists have been studying how people read on a computer. In particular, studies of the influence of just putting links into text, as compared with reading straight text, have consistently found that readers’ comprehension tends to go down even if they don’t click on the links. Every time you see a link, even if you’re not conscious of switching gears, the decision-making and problem-solving parts of your brain kick in. It’s the prefrontal cortex, whereas retention is diffuse, including more of your midbrain.”

It’s not all bad, according to Carr. “The good news is that googling seems to keep your brain sharp, almost like doing a crossword puzzle,” he says. “It’s good to use your prefrontal cortex, but the problem is you lose the calmness you need to make long-term memories or weave the new information into your previous memories and make interpretations.”

The cause is thinkus interruptus, he says, an infantilization of the reader. “Just putting in hyperlinks sends you back to that decoding behavior you did when you were learning to read. On top of that, when you go from images to video to text to sound, you recall less of each. There’s a lot of mental energy that’s used just to shift to the interruption and then reframe your brain to return to the original task,” he says. “Learning declines when kids are instant messaging or workers are checking their e-mail inboxes.”

The phenomenon is compounded by its sheer pervasiveness. “It’s not just hypertext or the interruptions or the multimedia presentations or the multitasking,” he says. “It’s that all of them are happening all the time. While there’s no killer study yet showing our brain cells are getting reshaped, there’s an overwhelming amount of circumstantial evidence.”

Carr is not without his critics. In a blog post, Trent Batson, executive director of the Association for Authentic, Experiential and Evidence-Based Learning, dismisses Carr’s thesis as antediluvian, adding that he’s heard “similar warnings” for years. Batson counters that the Internet brings a healthy return to the oral tradition of learning: “We find ourselves creating knowledge continually and rapidly as our social contacts on the web expand. We have rediscovered new ways to enjoy learning in a social setting.”

How did Carr, the English major, come to plumb the depths of these shallows? When he graduated, he was headed for esoterica. He loved Hanover, but an academic sojourn in London during his junior fall (where he met his wife, Ann, who was on the same program during a hiatus from Smith) had helped accelerate his studies. He finished his coursework by senior fall, returning only for graduation ceremonies in the spring. After a year working as an editor and thrashing some lead guitar in his own band (“I don’t have any rhythm,” he admits), Carr entered Harvard University’s doctoral program in English literature.

The tweed coat and pipe were not to be. “It was all about deconstruction then,” he says. “It was far too theoretical for my taste.”

Carr got his master’s and, in his early 20s with a baby on the way, was hired as an editor with a management consultancy. In 1997 he moved to the Harvard Business Review, just as the Internet was revolutionizing the workplace. There he threw his first rhetorical bomb in “IT Doesn’t Matter,” a piece that argued that the ubiquity of computing had neutered its value proposition as a competitive advantage. The piece provoked much scorn, with Microsoft CEO Steve Ballmer pronouncing it “hogwash.”

Carr’s resulting book, Does IT Matter?, came out in 2004. It was followed by an even broader success, The Big Switch (2008), an early parsing of cloud computing that likened the shift to the rise of the electric grid. As usual, the suggestion that computation as a utility might carry some negatives was tough for some to swallow. Some critics called the book “disturbing” in its distrust of utopian boosterism; others deemed his analysis “moderate” and “reasonable.”

“I think there’s legitimate reason to be fearful now,” Carr says, citing concerns that we may profoundly erode our capacity for depth of thought. “There’s a lot of science on the biological plasticity of our brains, which adapt throughout our lifetimes. And the brain wants to be efficient. So when you’re doing something, the brain essentially recruits neurons that build pathways that support that activity. But as we adapt to this interrupted juggling environment, we’re simply not practicing those ways of thinking that encourage you to think of one thing rather than many things.”

Anticipating Batson’s point about the natural chant-and-response of early societal learning, Carr concedes that quiet, contemplative focus is not the natural way of human thinking. Quite the opposite. “This is speculative on my part, but I don’t think there were many rewards for primitive man to focus on one thing,” he says. “That would lead to maybe missing a source of food. I think our brains are naturally inclined to notice everything around us for signals that help us survive. There is evidence that that is supported by the neurotransmitters in our brains, where you get a squirt of dopamine every time you learn something new. That’s why people, myself included, can be so compulsive about using the Net, because it appeals to that primitive urge we have to pay attention to everything.”

So we’re googling our way back to the future. “Our brains are adapting to the way we’ll be rewarded down the road,” he predicts. “Whether it’s work or socializing or education, more and more rewards are going to go to those who can find and exchange information quickly.”

Carr’s position arguably tracks with the slow food movement. “I’m not sure I’d put it that way, but I see a parallel,” he says. “I’m not making an argument against all of this. I’m just suggesting that data technology is becoming so dominant that we’re losing the opportunity and the encouragement to engage in what I think is the highest form of thought.”

Beyond the cognitive devolution, Carr also sees economic exploitation at work. Indeed, he has likened platforms such as YouTube to sharecropping. “You can use its servers and tools,” he says, “but it takes the commercial value from what you do. The sharecropping analogy sees such sites as similar to the plantation owners after the Civil War, renting people tools and land and then taking the lion’s share of the produce. You could argue that it’s symbiotic, but the two groups are actually operating in two different economies. Internet companies are operating in the old for-profit economies, while the contributors are operating in an ‘attention economy’ or the ‘gift economy’ as it’s often called, which is misleading because it implies money is not involved. When, of course, it is. It’s just being sucked out of these communities by the corporations that are providing the ‘acreage.’ ”

With his son a junior in college and his daughter pursuing a doctorate in comparative literature, Carr’s personal response to all this has included decamping with his wife from New England to Boulder, Colorado. He writes a blog, roughtype.com. “I’m hoping to put together a proposal for a new book by year’s end,” he says. “The problem is I don’t have an idea for it yet. I’m hoping to solve that problem during the next few months.” Meanwhile, he can devote more time to fly-fishing and skiing, and to contemplation.

Which is all well and good. Except that he replied to a recent Saturday afternoon e-mail within 67 seconds. “Writing The Shallows,” he concedes, “has not changed me quite as much as I might have hoped.”

Dirk Olin is editor and publisher of Corporate Responsibility Magazine. His first book, Rebuilding Justice, will be published this fall.